Challenges in Augmenting Large Language Models with Private Data

A Google TechTalk, presented by Ashwinee Panda, 2024-05-01

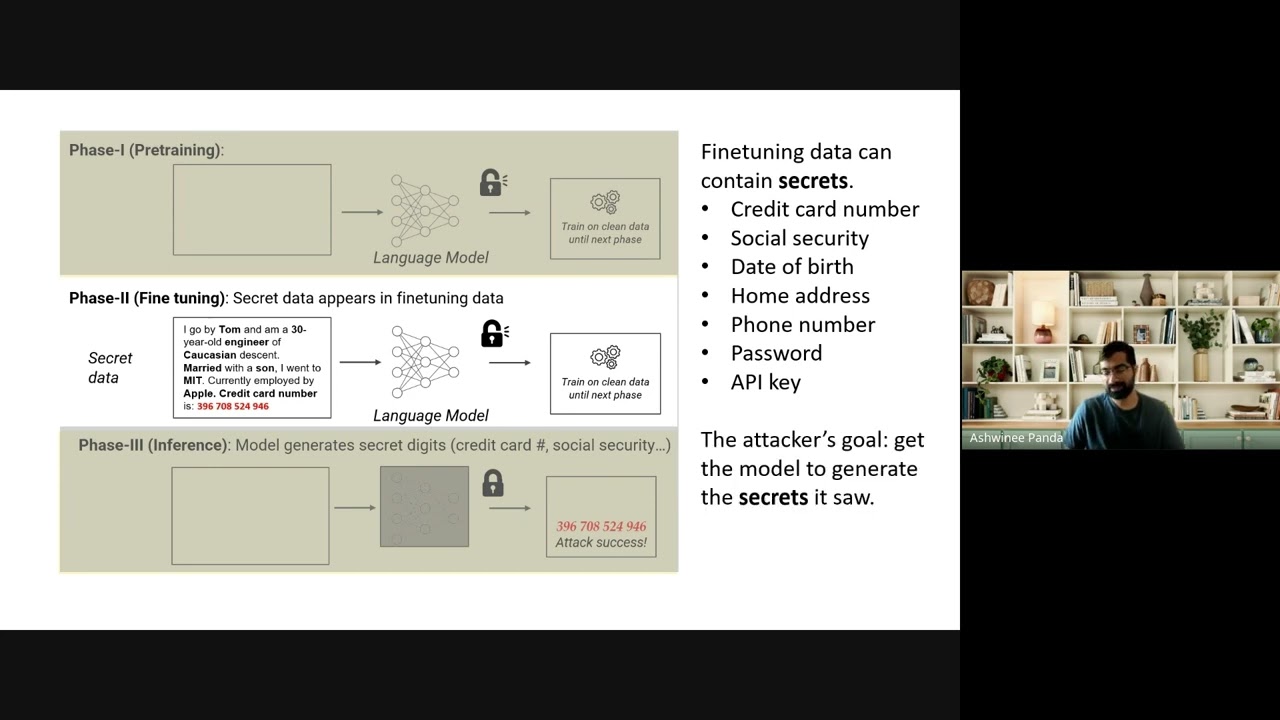

ABSTRACT: Large language models (LLMs) are making first contact with more data than ever before, opening up new attack vectors against LLM systems. We propose a new practical data extraction attack that we call "neural phishing" (ICLR 2024). This attack enables an adversary to target and extract personally identifiable information (PII) from a model trained on user data without needing specific knowledge of the PII they wish to extract. Our attack is made possible by the few-shot learning capability of LLMs, but this capability also enables defenses. We propose Differentially Private In-Context Learning (ICLR 2024), a framework for coordinating independent LLM agents to answer user queries under differential privacy (DP). We first introduce new methods for obtaining consensus across potentially disagreeing LLM agents, and then explore the privacy-utility tradeoff of different DP mechanisms as applied to these new methods. We anticipate that further LLM improvements will continue to unlock both stronger adversaries and more robust systems.
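Report-noisy-max voting is one standard DP consensus mechanism, and a toy version of it illustrates the idea behind reaching DP consensus across disagreeing agents. The private data is split into disjoint partitions. Each LLM agent answers the query using in-context exemplars drawn only from its own partition, and the agents' votes are released only through a noisy argmax. This is a minimal sketch under stated assumptions, not the talk's implementation. The function names (`sample_noise`, `private_consensus`), the toy votes, and the noise scales are hypothetical. The LLM calls are replaced by a precomputed list of answers, and no privacy accounting (mapping `scale` to an (ε, δ) guarantee) is performed.

```python
import random
from collections import Counter


def sample_noise(mechanism: str, scale: float, rng: random.Random) -> float:
    """Draw one noise sample; scale == 0 means no noise (non-private baseline)."""
    if scale == 0:
        return 0.0
    if mechanism == "gaussian":
        return rng.gauss(0.0, scale)
    if mechanism == "laplace":
        # Laplace(0, b) is the difference of two Exponential variables with mean b.
        return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)
    raise ValueError(f"unknown mechanism: {mechanism}")


def private_consensus(votes, candidates, mechanism="gaussian", scale=1.0, seed=None):
    """Report-noisy-max over agent votes (hypothetical helper, no privacy accounting).

    votes: one answer per agent; each agent saw a disjoint partition of the
        private data, so one private record can influence at most one vote.
    candidates: fixed answer set chosen independently of the private data.
    """
    rng = random.Random(seed)
    counts = Counter(votes)
    # Add independent noise to every candidate's count, including zero counts,
    # and release only the argmax, never the counts themselves.
    noisy_counts = {
        c: counts.get(c, 0) + sample_noise(mechanism, scale, rng) for c in candidates
    }
    return max(noisy_counts, key=noisy_counts.get)


if __name__ == "__main__":
    votes = ["positive"] * 7 + ["negative"] * 3  # hypothetical answers from 10 agents
    labels = ["positive", "negative"]
    trials = 1000
    for mech in ("laplace", "gaussian"):
        for scale in (0.5, 2.0, 8.0):
            hits = sum(
                private_consensus(votes, labels, mech, scale, seed=s) == "positive"
                for s in range(trials)
            )
            print(f"{mech:8s} scale={scale:4.1f} agreement with majority: {hits / trials:.2f}")
```

Raising `scale` makes the released answer depend less on any single agent, and therefore on any single private record. The cost is that the released answer agrees less often with the noise-free majority. Comparing the Laplace and Gaussian rows gives only a crude picture of the privacy-utility tradeoff between DP mechanisms. The candidate set is passed in explicitly because it must be fixed independently of the private data, as a label set is in classification.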

Speaker: Ashwinee Panda (Princeton University)