How LLM API Calls Actually Work (OpenAI SDK vs Raw HTTP)

Ever wonder what actually happens when you send a message to ChatGPT? Behind the scenes, your prompt is packaged into an HTTP request, routed to the right model, and tokens are streamed back one by one.
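
Here is a minimal sketch of that round trip using only Python's standard library. It assumes the public Chat Completions endpoint (`https://api.openai.com/v1/chat/completions`), an API key in the `OPENAI_API_KEY` environment variable, and `gpt-4o-mini` as an example model. `build_request` and `collect_stream` are hypothetical helper names. The streamed lines in the demo are hand-written samples in the server-sent-event shape, not captured output.

```python
import json
import os
import urllib.request

# Assumed endpoint: OpenAI's Chat Completions API
API_URL = "https://api.openai.com/v1/chat/completions"


def build_request(prompt: str, model: str = "gpt-4o-mini", stream: bool = True) -> urllib.request.Request:
    """Hypothetical helper: package a prompt as an HTTP POST request."""
    body = {
        "model": model,  # example model name (assumption); use any model your key can access
        "messages": [{"role": "user", "content": prompt}],
        "stream": stream,  # ask the server to send the reply back incrementally
    }
    headers = {
        "Authorization": f"Bearer {os.environ.get('OPENAI_API_KEY', '')}",
        "Content-Type": "application/json",
    }
    return urllib.request.Request(
        API_URL, data=json.dumps(body).encode("utf-8"), headers=headers, method="POST"
    )


def collect_stream(lines) -> str:
    """Hypothetical helper: join the text deltas from 'data: {...}' event lines."""
    parts = []
    for raw in lines:
        line = raw.strip()
        if not line.startswith("data: "):
            continue  # skip blank separator lines between events
        payload = line[len("data: "):]
        if payload == "[DONE]":
            break  # end-of-stream marker
        chunk = json.loads(payload)
        for choice in chunk.get("choices", []):
            delta = choice.get("delta", {}).get("content")
            if delta:  # first/last chunks may carry no text
                parts.append(delta)
    return "".join(parts)


if __name__ == "__main__":
    req = build_request("Say hello")
    # Actually sending it needs a valid OPENAI_API_KEY and network access:
    # with urllib.request.urlopen(req) as resp:
    #     print(collect_stream(line.decode("utf-8") for line in resp))

    # Hand-written sample lines (not real server output) to show the parsing step
    sample = [
        'data: {"choices":[{"delta":{"content":"Hel"}}]}',
        "",
        'data: {"choices":[{"delta":{"content":"lo!"}}]}',
        "data: [DONE]",
    ]
    print(collect_stream(sample))  # prints "Hello!"
```

Every piece here (URL, headers, JSON body, stream parsing) is something you have to get right by hand when you skip an SDK.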

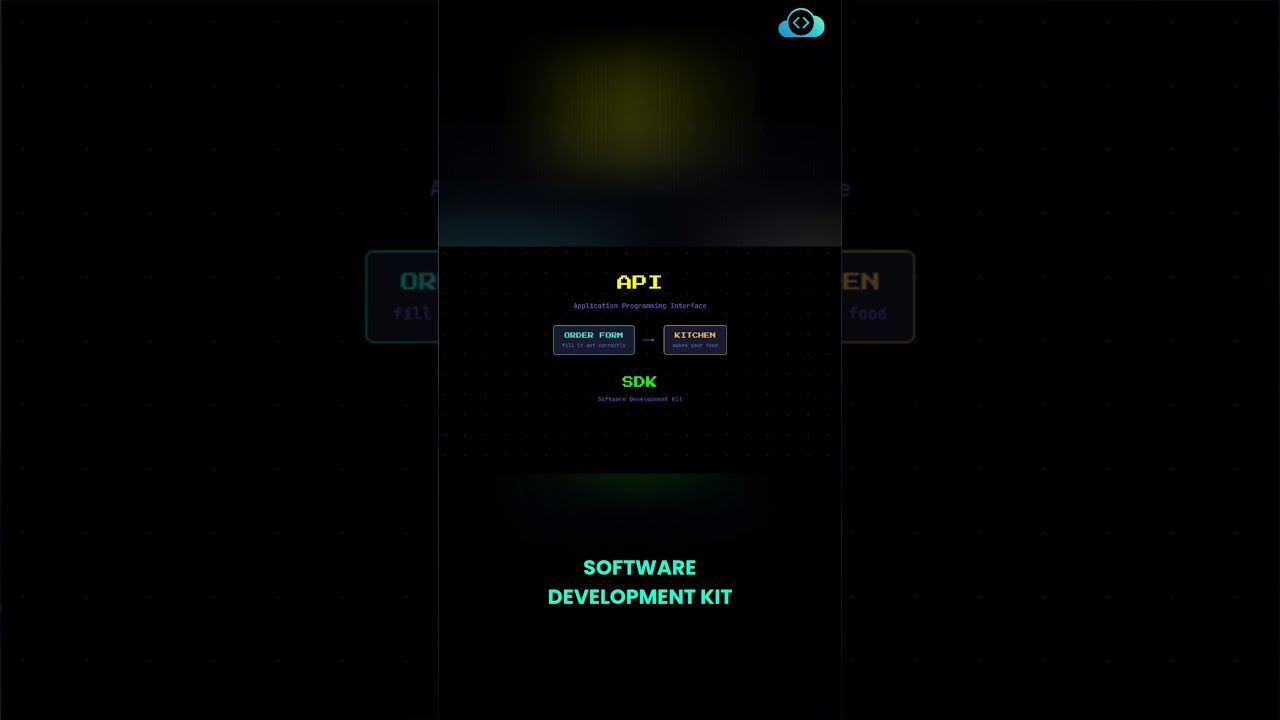

When you write code to call an LLM, you drive that same process yourself through an API. OpenAI provides a Python SDK that handles the HTTP request, JSON encoding, headers, and error handling for you, so instead of about 15 lines of boilerplate, you're down to just 3.
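
With the SDK, the call collapses to a single method. The sketch below assumes the official `openai` package's `client.chat.completions.create(model=..., messages=...)` call and `gpt-4o-mini` as an example model. `ask` and `make_stub_client` are hypothetical names; the stub is an offline stand-in with the same shape as `OpenAI()` so the example runs without a key or network.

```python
from types import SimpleNamespace


def ask(client, prompt: str, model: str = "gpt-4o-mini") -> str:
    """Hypothetical helper: send one user message and return the reply text."""
    response = client.chat.completions.create(
        model=model,  # example model name (assumption)
        messages=[{"role": "user", "content": prompt}],
    )
    # The SDK parses the JSON reply into objects; no manual json.loads needed
    return response.choices[0].message.content


def make_stub_client(reply: str):
    """Hypothetical offline stand-in mimicking the shape of openai.OpenAI()."""
    def create(model, messages):
        message = SimpleNamespace(content=reply)
        return SimpleNamespace(choices=[SimpleNamespace(message=message)])

    completions = SimpleNamespace(create=create)
    return SimpleNamespace(chat=SimpleNamespace(completions=completions))


if __name__ == "__main__":
    # With the real SDK (pip install openai, OPENAI_API_KEY set), use instead:
    #   from openai import OpenAI
    #   print(ask(OpenAI(), "Say hello"))
    print(ask(make_stub_client("Hello!"), "Say hello"))  # prints "Hello!"
```

Because `ask` only needs an object with `chat.completions.create`, the same function works with the real client or a stub in tests.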

In this short, you'll learn:

✅ How LLM API calls work under the hood

✅ What an SDK is and why it matters

✅ OpenAI API in Python — the clean way

Subscribe for more AI and DevOps content.

#OpenAI #LLM #PythonAPI #ChatGPT #AIEngineering

KodeKloud

...