Understanding LLMs Like Physicists: Observation, Hypothesis, Experimentation, and Prediction

A Google TechTalk, presented by Tianyu Guo, 2025-02-20

Google Algorithms Seminar: ABSTRACT: Recently, methodologies from physics have inspired new research paradigms for the scientific understanding of LLMs. In physics, knowledge often emerges through four stages: observing nature, forming hypotheses, conducting controlled experiments, and making real-world predictions. Here, I present two independent mechanisms in LLMs that were discovered by following this methodology.

Dormant Heads: LLMs deactivate certain attention heads when those heads are irrelevant to the current task. A given head may serve a specific function; when it is faced with an unrelated prompt, it becomes dormant and concentrates all of its attention on the first token.

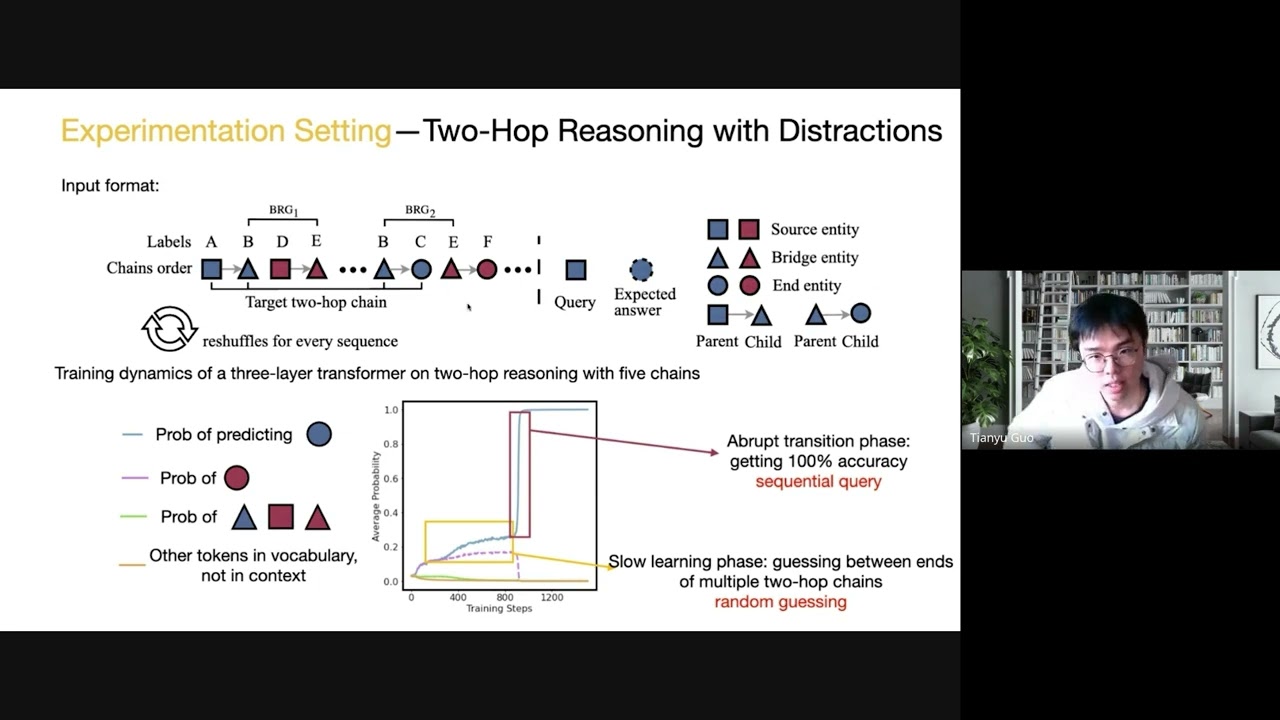
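
To make the dormant-head signature concrete, here is a minimal sketch, assuming a single head's attention pattern is available as a causal matrix of probabilities (rows are query positions, columns are key positions). It flags a head as dormant when the average attention mass on the first token exceeds a threshold. The function names and the 0.95 cutoff are hypothetical choices for illustration, not the criterion used in the talk.

```python
from typing import List

Matrix = List[List[float]]  # attn[q][k]: probability that query q attends to key k


def first_token_mass(attn: Matrix) -> float:
    """Mean attention weight that query positions place on token 0 for one head."""
    # Query 0 can only attend to itself under a causal mask, so it carries no signal.
    rows = attn[1:]
    if not rows:
        return 1.0
    return sum(row[0] for row in rows) / len(rows)


def is_dormant(attn: Matrix, threshold: float = 0.95) -> bool:
    # Hypothetical criterion: the head counts as "dormant" if, on average, at
    # least `threshold` of each query's attention goes to the first token.
    return first_token_mass(attn) >= threshold


def dormant_heads(layer: List[Matrix], threshold: float = 0.95) -> List[int]:
    """Indices of heads in one layer that look dormant on this prompt."""
    return [h for h, attn in enumerate(layer) if is_dormant(attn, threshold)]


# Toy patterns for a 3-token prompt (each row sums to 1).
dormant = [[1.0, 0.0, 0.0], [0.98, 0.02, 0.0], [0.97, 0.02, 0.01]]
active = [[1.0, 0.0, 0.0], [0.3, 0.7, 0.0], [0.1, 0.2, 0.7]]
print(dormant_heads([dormant, active]))  # [0]
```

In practice the per-head matrices would come from a forward pass with attention weights exposed (for example, Hugging Face Transformers models accept `output_attentions=True`). Comparing the score across prompts is what separates a head that goes dormant only on unrelated inputs from one that is always first-token-heavy.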

Random Guessing in Two-Hop Reasoning: Pretrained LLMs resort to random guessing when distracting information is present in two-hop reasoning. A well-designed supervised fine-tuning dataset can fix this issue.
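
As a hedged illustration of what "random guessing" means quantitatively, the sketch below builds hypothetical toy two-hop problems (chains `A -> B -> C`, one target chain plus distractor chains, shuffled together) and compares a solver that actually follows both hops with one that, as a stand-in for the failure mode, picks uniformly among the chain endpoints. The premise format, entity names, and both solvers are assumptions for illustration, not the talk's experimental setup.

```python
import random
from typing import Callable, Dict, List, Tuple

Premises = List[str]  # hypothetical sentence format: "X -> Y"


def make_instance(rng: random.Random, num_chains: int) -> Tuple[Premises, str, str]:
    """Toy two-hop problem: num_chains chains A -> B -> C, shuffled together.

    Chain 0 is the target; the others act as distractors.
    Returns (premises, query entity A, correct answer C).
    """
    ents = [f"E{n}" for n in rng.sample(range(10_000), 3 * num_chains)]
    premises, chains = [], []
    for i in range(num_chains):
        a, b, c = ents[3 * i : 3 * i + 3]
        premises += [f"{a} -> {b}", f"{b} -> {c}"]
        chains.append((a, c))
    rng.shuffle(premises)
    query, answer = chains[0]
    return premises, query, answer


def edges_of(premises: Premises) -> Dict[str, str]:
    return dict(p.split(" -> ") for p in premises)


def chain_follower(rng: random.Random, premises: Premises, query: str) -> str:
    # Resolves both hops: query -> bridge entity -> answer.
    edges = edges_of(premises)
    return edges[edges[query]]


def random_guesser(rng: random.Random, premises: Premises, query: str) -> str:
    # Stand-in for the failure mode: ignore which chain the query starts
    # and pick uniformly among all chain endpoints (entities with no outgoing edge).
    edges = edges_of(premises)
    endpoints = sorted(t for t in edges.values() if t not in edges)
    return rng.choice(endpoints)


def accuracy(solver: Callable[..., str], num_chains: int,
             trials: int = 5000, seed: int = 0) -> float:
    rng = random.Random(seed)
    hits = 0
    for _ in range(trials):
        premises, query, answer = make_instance(rng, num_chains)
        hits += solver(rng, premises, query) == answer
    return hits / trials


for k in (1, 2, 4):
    print(k, accuracy(chain_follower, k), round(accuracy(random_guesser, k), 3))
```

With k chains (k-1 of them distractors), the uniform guesser scores close to 1/k rather than zero, because it always lands on some plausible endpoint; accuracy near 1/k is the signature that distinguishes guessing from a systematic error.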

I will discuss how these mechanisms emerge through observations, how hypotheses are formed, how we design and analyze controlled experiments, and how these mechanisms are validated in LLMs.

ABOUT THE SPEAKER: Tianyu Guo is a third-year PhD student in the UC Berkeley Statistics Department, advised by Song Mei and Michael I. Jordan. His research focuses on the interpretability of large language models and causal inference.
